Introduction

Machine Learning is changing the way we see the world around us. From weather prediction to medical diagnosis, from content recommendations on streaming platforms to financial fraud detection, Machine Learning is increasingly present in our daily lives.

But what exactly is it, and how does it work? In this post, we will explore the fundamental concepts of Machine Learning and see how it can be used to solve real-world problems. We will also look at how to get started with Machine Learning, what resources are available, and how to use this technology to improve both work and everyday life.

Caveat: This article is a simple introduction to a vast subject. It is written for anyone who wants to understand the basic concepts of Machine Learning, without requiring advanced technical or mathematical knowledge. At the end of the post, we provide a set of useful resources for anyone who wishes to explore the topic further and continue what is a truly fascinating journey.

What Is Machine Learning

Machine Learning, or automated learning, is a technology that allows machines to “learn” from data and improve their performance without being explicitly programmed. In other words, Machine Learning enables machines to “learn” from experience, just as humans do.

This post focuses on the two most widely used types of Machine Learning: supervised Machine Learning and unsupervised Machine Learning. Other paradigms, such as reinforcement learning, are outside its scope.

In supervised Machine Learning, the model is “trained” on a dataset that includes examples of both inputs and desired outputs. The model then uses these examples to make predictions on new data. In unsupervised Machine Learning, the model must “discover” on its own the structures and relationships within the data, without being guided by pre-defined examples.

Machine Learning is used across a wide range of applications, from weather prediction to medical diagnosis, from content recommendations to financial fraud detection. In general, the goal of Machine Learning is to automate decisions and predictions based on data, improving the efficiency and accuracy of the process.

Types of Machine Learning: Supervised and Unsupervised

As we have already seen, Machine Learning can be divided into two main categories: supervised Machine Learning and unsupervised Machine Learning.

Supervised Machine Learning is the most common type of automated learning and is based on a set of already labeled data. In other words, the model is “trained” on a dataset that includes examples of both inputs and desired outputs. The model then uses these examples to learn to make inferences on new data. For example, a spam classifier could be trained on a set of emails labeled as “spam” or “not spam,” and then used to classify new incoming emails.
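To make the spam example concrete, here is a minimal sketch of a word-count classifier written with the Python standard library only. The six training emails, the `train` and `predict` function names, and the choice of a simple Naive Bayes approach are all assumptions made for illustration; a real spam filter would use far more data and, typically, a library such as scikit-learn.

```python
import math
from collections import Counter

# Hypothetical toy training set: (email text, label) pairs.
TRAIN = [
    ("win money now", "spam"),
    ("cheap money offer", "spam"),
    ("win a free prize now", "spam"),
    ("meeting agenda for monday", "not spam"),
    ("lunch on monday", "not spam"),
    ("project meeting notes", "not spam"),
]

def train(examples):
    """Count the words seen under each label and the emails per label."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.split())
    return word_counts, label_counts

def predict(text, word_counts, label_counts):
    """Return the label with the highest log-probability for the text."""
    vocab = {word for counts in word_counts.values() for word in counts}
    total_emails = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, counts in word_counts.items():
        # Prior: fraction of training emails that carry this label.
        score = math.log(label_counts[label] / total_emails)
        total_words = sum(counts.values())
        for word in text.split():
            # Add-one smoothing avoids log(0) for words unseen under a label.
            score += math.log((counts[word] + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

word_counts, label_counts = train(TRAIN)
print(predict("free money", word_counts, label_counts))
print(predict("monday meeting", word_counts, label_counts))
```

The labeled examples are the "desired outputs" described above: the model never receives a rule such as "money means spam", it only counts how often each word appears under each label.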

Unsupervised Machine Learning, on the other hand, is based on a set of unlabeled data. In other words, the model must “learn” on its own to discover structures and relationships within the data. A typical example of this type of learning is clustering, where data is divided into groups (clusters) based on their similarities.
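As a rough illustration of clustering, the sketch below implements a very small version of k-means using only the Python standard library. The six sample points and the decision to start from the first k points are assumptions chosen to keep the example short and repeatable; library implementations choose their starting centroids more carefully.

```python
import math

def kmeans(points, k, iterations=10):
    """Tiny k-means: returns (centroids, cluster index for each point)."""
    # Assumption: use the first k points as the starting centroids.
    centroids = [points[i] for i in range(k)]
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Step 1: assign each point to its nearest centroid.
        assignments = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Step 2: move each centroid to the mean of its assigned points.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = tuple(
                    sum(axis) / len(members) for axis in zip(*members)
                )
    return centroids, assignments

# Hypothetical unlabeled data: two visibly separate groups of 2-D points.
points = [(1.0, 1.0), (8.0, 8.0), (1.5, 2.0), (9.0, 8.5), (1.0, 1.5), (8.5, 9.0)]
centroids, assignments = kmeans(points, k=2)
print(assignments)  # [0, 1, 0, 1, 0, 1]
print(centroids)
```

No labels are supplied anywhere: the two groups emerge purely from the distances between the points.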

In general, we can say that supervised Machine Learning uses labeled data to make predictions or classifications, while unsupervised Machine Learning uses unlabeled data to make discoveries or identify relationships within the data.

Main Supervised Learning Algorithms

The main supervised Machine Learning algorithms are:

- Linear Regression: used for quantitative predictions on a continuous variable. For example, predicting the price of a house based on its square footage.

We have written dedicated posts on this topic, which may be very helpful for a proper understanding:

Correlation and Regression Analysis

Multiple Regression Analysis

- Logistic Regression: used for categorical variable predictions, i.e., when the output is one class among two or more possibilities. For example, predicting whether a patient has a certain disease or not.

- Decision Trees: used for both classification and regression. They consist of a decision graph where each node represents a decision and each branch represents an outcome.

- Random Forest: an ensemble of many decision trees, each trained on a random sample of the data. Their predictions are combined by averaging (for regression) or by majority vote (for classification).

- Gradient Boosting: an algorithm that builds decision trees one after another, with each new tree trained to correct the errors of the trees before it.

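- Support Vector Machine (SVM): used mainly for classification. It looks for the boundary that separates the classes with the widest possible margin, and kernel functions extend it to data that is not linearly separable.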

- k-Nearest Neighbors (k-NN): classifies a new data point according to the labels of the k most similar (nearest) points in the training data.

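- Naive Bayes: a probabilistic classifier that applies Bayes’ theorem under the simplifying assumption that the features are independent of one another given the class.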
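The first item in the list, linear regression, can be sketched in a few lines. The example below fits a straight line to four hypothetical houses (square footage and price in thousands of dollars) using the standard least-squares formulas. The numbers and the `fit_line` helper are invented for illustration only.

```python
def fit_line(xs, ys):
    """Ordinary least squares with one input: y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance of x and y divided by the variance of x.
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    # The fitted line always passes through the point of means.
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data: square footage and sale price (thousands of dollars).
square_feet = [1000, 1500, 2000, 2500]
prices = [200, 290, 410, 500]

slope, intercept = fit_line(square_feet, prices)
predicted = slope * 1800 + intercept  # prediction for an unseen 1800 sq ft house
print(f"slope={slope:.3f}, intercept={intercept:.1f}")
print(f"predicted price for 1800 sq ft: {predicted:.1f}")
```

The slope can be read as the estimated price increase per additional square foot, which is what makes this model easy to interpret.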

Main Unsupervised Learning Algorithms

- Clustering: used to divide data into groups or clusters based on their similarities. The most common clustering algorithm is k-means.

- Principal Component Analysis (PCA): used to reduce the dimensionality of data, that is, to transform a set of correlated variables into a set of uncorrelated variables.

- Density-Based Spatial Clustering of Applications with Noise (DBSCAN): used to find clusters based on the density of data points, treating points in sparse regions as noise.

- Association Rule Mining (Apriori, FP-Growth): used to find association rules between variables.

- Anomaly Detection Algorithms (One-class SVM, Isolation Forest): used to detect elements that deviate from the norm.

- Self-Organizing Maps (SOM): used to visualize hidden structures in the data.

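- Structure Detection Algorithms (Spectral Clustering, Hierarchical Clustering): hierarchical clustering builds a tree of nested clusters that reveals hierarchical relationships in the data, while spectral clustering groups points using a graph of their pairwise similarities.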

These are some of the main unsupervised Machine Learning algorithms, but there are many others. As with supervised Machine Learning, the choice of algorithm depends on the characteristics of the specific problem and the nature of the data.

In practice, choosing the right algorithm for a specific solution is a critical decision that can determine the success or complete failure of a data analysis effort.
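To make the PCA entry in the list above more tangible, the sketch below handles the special case of just two variables, where the principal axes can be found with a closed-form rotation angle. This is a simplified illustration under that assumption, not a general PCA implementation; the data points and function names are hypothetical.

```python
import math

def pca_2d(points):
    """Rotate 2-D points onto their principal axes (two-variable case only)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    centered = [(x - mean_x, y - mean_y) for x, y in points]
    # Entries of the 2x2 covariance matrix [[a, b], [b, c]].
    a = sum(x * x for x, _ in centered) / (n - 1)
    b = sum(x * y for x, y in centered) / (n - 1)
    c = sum(y * y for _, y in centered) / (n - 1)
    # Angle of the direction along which the data varies the most.
    theta = 0.5 * math.atan2(2 * b, a - c)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    # First coordinate lies along that direction, the second at 90 degrees.
    return [(x * cos_t + y * sin_t, -x * sin_t + y * cos_t) for x, y in centered]

def covariance(pairs):
    """Sample covariance between the two coordinates of a list of pairs."""
    n = len(pairs)
    mean_u = sum(u for u, _ in pairs) / n
    mean_v = sum(v for _, v in pairs) / n
    return sum((u - mean_u) * (v - mean_v) for u, v in pairs) / (n - 1)

# Hypothetical data: two strongly correlated variables.
points = [(1.0, 1.2), (2.0, 1.9), (3.0, 3.2), (4.0, 3.8), (5.0, 5.1)]
components = pca_2d(points)

print(covariance(points))                  # well above zero: inputs are correlated
print(abs(covariance(components)) < 1e-9)  # True: new variables are uncorrelated
```

Keeping only the first coordinate of each transformed point would reduce the data from two dimensions to one while preserving most of its variation.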

The Main Phases of the Machine Learning Process

- Data Collection: The first phase consists of gathering the data needed for the problem to be solved. This data must be cleaned, formatted, and prepared for processing.

- Data Analysis: Once the data has been collected, it is important to explore it in order to better understand the problem and identify any interesting relationships or characteristics.

- Model Selection: The next phase consists of choosing the most appropriate Machine Learning model for the problem at hand. There are many available algorithms, including decision trees, neural networks, and support vector machines (SVM).

- Model Training: Once the model has been selected, it must be “trained” using the training data. This process allows the model to “learn” from the data and become capable of making predictions on new data.

- Model Evaluation: Once trained, the model must be evaluated on a test dataset that was not used during training, in order to estimate how accurately it will perform on new data.

- Model Deployment: If the model has shown good performance, it can be used to solve the problem in question and deployed to a production environment.

- Monitoring and Maintenance: The model must be monitored to ensure it continues to function correctly and, if necessary, updated or replaced if performance declines.
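The phases above, from data collection through evaluation, can be sketched end to end in a few lines. The example below uses only the Python standard library, synthetic data, and a deliberately simple 1-nearest-neighbor model; all of these are assumptions made to keep the sketch self-contained. Deployment and monitoring are not shown.

```python
import math
import random

rng = random.Random(0)  # fixed seed so the run is repeatable

# Data collection: hypothetical 2-D points in two well-separated groups.
data = [((rng.uniform(-1, 1), rng.uniform(-1, 1)), "A") for _ in range(10)]
data += [
    ((10 + rng.uniform(-1, 1), 10 + rng.uniform(-1, 1)), "B") for _ in range(10)
]

# Hold out 25% of the data as a test set the model never sees in training.
rng.shuffle(data)
split = int(0.75 * len(data))
train_set, test_set = data[:split], data[split:]

# Model selection and training: a 1-nearest-neighbor model "trains" simply
# by storing the training examples and comparing new points against them.
def predict(point, examples):
    """Return the label of the training example closest to the point."""
    nearest = min(examples, key=lambda example: math.dist(point, example[0]))
    return nearest[1]

# Model evaluation: fraction of held-out points that are labeled correctly.
correct = sum(predict(point, train_set) == label for point, label in test_set)
accuracy = correct / len(test_set)
print(f"accuracy on {len(test_set)} held-out points: {accuracy:.2f}")
```

Because the two groups are far apart, every held-out point is classified correctly here. Real data is rarely this clean, which is why evaluating on data kept separate from training matters.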

Getting Started with Machine Learning: Tutorials and Resources

Machine Learning is a rapidly evolving field, and there are many resources available for those who want to get started. Any list is necessarily incomplete and subject to personal preferences, but here are some good starting points:

Tutorials: There are numerous tutorials available online that cover the basics of Machine Learning. For example, the scikit-learn website has a tutorial section that explains how to use the library to build some of the most common models.

https://scikit-learn.org/stable/tutorial/index.html

Books: There are many books on the subject, but some of the classics in the field include:

“Introduction to Machine Learning” by Alpaydin: https://www.amazon.com/Introduction-Machine-Learning-Adaptive-Computation/dp/0262028182

“Python Machine Learning” by Raschka and Mirjalili: https://www.packtpub.com/data/python-machine-learning-third-edition

Online Courses: There are many online courses that cover the basics of Machine Learning, such as the excellent course by Andrew Ng on Coursera:

https://www.coursera.org/learn/machine-learning

or the Machine Learning course by fast.ai:

https://www.fast.ai/

Tools: There are many tools and libraries that can be used to explore data and build models. Some of the most popular include:

scikit-learn: a Machine Learning library for Python

https://scikit-learn.org/stable/

TensorFlow: a Machine Learning library developed by Google

https://www.tensorflow.org/

Keras: a high-level interface for building neural networks in TensorFlow

https://keras.io/

PyTorch: an open-source Machine Learning library originally developed by Facebook (now Meta)

https://pytorch.org/

In general, we recommend starting with tutorials and online courses to become familiar with the basic concepts, and then continuing with books and tools to deepen understanding and develop practical skills. To become a good data scientist, it is also important to work with real data and not just tutorials or exercises. Seeking out Machine Learning projects or competitions can help build concrete experience.

Experimenting with Code: Jupyter Lab and Google Colab

Jupyter Lab and Google Colab are both free and powerful tools for data exploration, learning, and testing Machine Learning code.

How can we use both tools to create development environments and share our work with others?

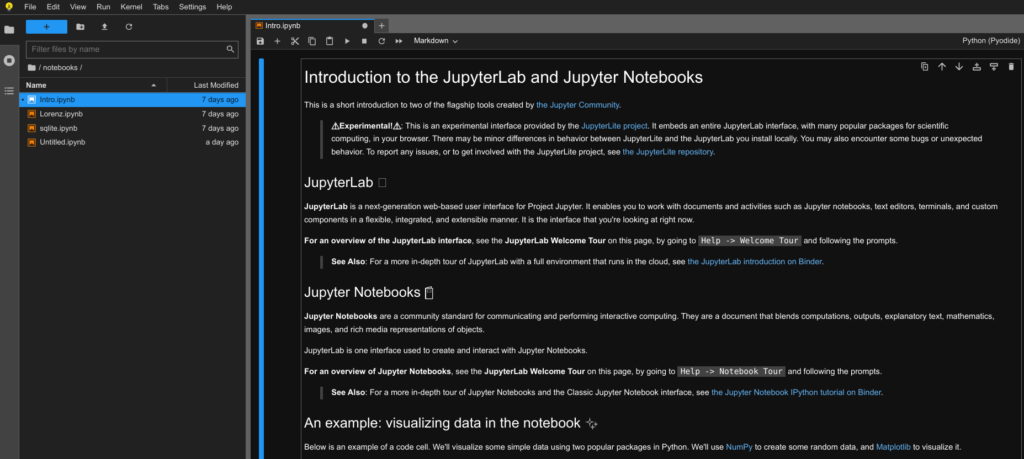

Jupyter Lab is the newer interface from the Jupyter project, the successor to the classic Jupyter Notebook. It is an interactive development environment that allows us to write, run, and document Python and R code within a web browser. It is particularly useful for data analysis and for learning Machine Learning.

To get started, Jupyter Lab needs to be installed on a local machine. This can be done easily using Anaconda, a Python distribution that includes Jupyter Lab and many other data science libraries. Once installed, Jupyter Lab can be launched from the command line and a new notebook opened to write and run code. Jupyter Lab is available at: https://jupyter.org/

It is also possible to test the environment directly in the browser with JupyterLite:

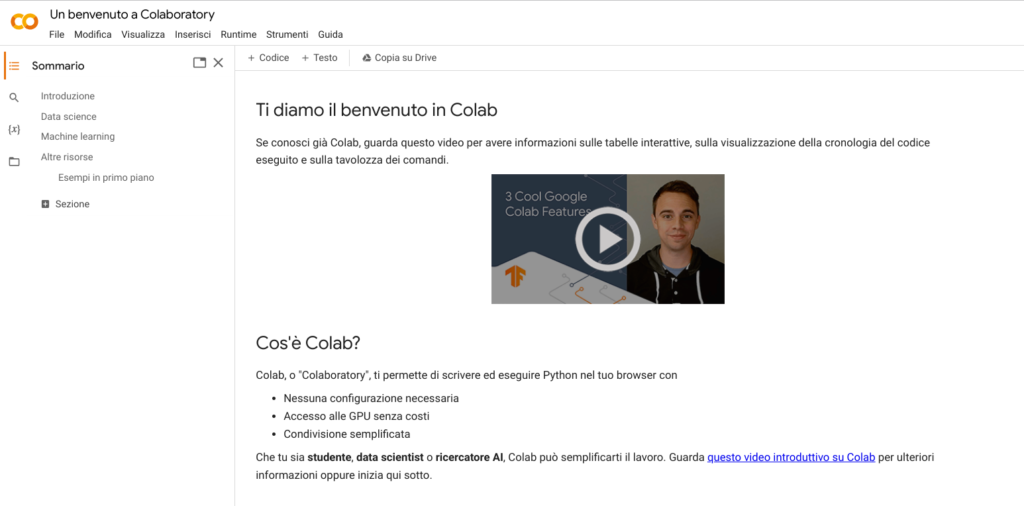

Google Colab is a cloud-based development environment that allows us to write and run Python and R code within a web browser without any installation. It is a very convenient option, because Colab can be accessed from any device with an internet connection and work can be shared with others simply by providing a link. It also offers access to a GPU or TPU, subject to availability, to speed up computations. Google Colab is available at: https://colab.research.google.com/

Both tools allow us to create a sequence of cells containing code and text. Code can be executed within cells and the results displayed directly in the notebook. This makes Jupyter Lab and Google Colab ideal for exploring data, learning Machine Learning, and sharing and documenting work.

Further Reading

For a solid introduction to the statistical foundations of machine learning, including regression, model selection, and prediction, Introduction to Econometrics by Stock and Watson (Introduzione all’econometria in its Italian edition) provides the quantitative framework that underpins many ML techniques. For a hands-on guide to online experimentation and A/B testing, essential skills for deploying ML models in production, Trustworthy Online Controlled Experiments by Kohavi, Tang and Xu is a widely cited reference.